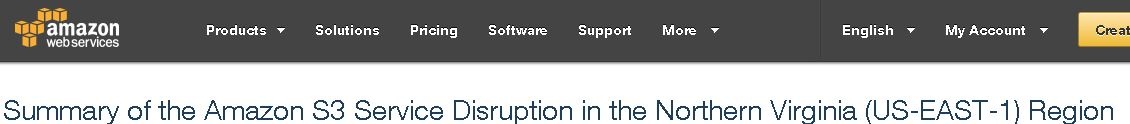

Anyone who follows technology news is aware that there was a significant outage of Amazon Web Services (AWS) last week. Many major organizations experienced outages as a result of the failure, and it took a little time before news got out about what had happened.

Amazon has been forthright in saying that the problem was caused by an error in its systems combined with some long-standing issues.

"Removing a significant portion of the capacity caused each of these systems to require a full restart. While these subsystems were being restarted, S3 was unable to service requests. Other AWS services in the US-EAST-1 Region that rely on S3 for storage, including the S3 console, Amazon Elastic Compute Cloud (EC2) new instance launches, Amazon Elastic Block Store (EBS) volumes (when data was needed from a S3 snapshot), and AWS Lambda were also impacted while the S3 APIs were unavailable."

What started out as a fairly routine maintenance activity escalated into a systems delay which had a major impact on a large number of organizations.

Hundreds (maybe thousands) of articles have been written about the outage.

What Might We Learn From This Situation?

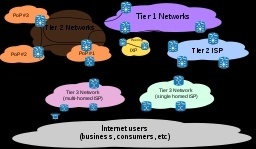

In spite of the overall reliability that such large IT systems offer, there are a few things that any IT operator might learn from the situation that Amazon experienced, which led to some uncomfortable hours for many of its customers.

- No matter how rigorous your design and procedures are, unforeseen events can and will happen when you are dealing with IT systems. Thinking ahead about what is most vulnerable in your operations, and how you would deal with a failure there, is a critical part of planning.

- Even large, well-resourced organizations can be caught out when they lose track of how their systems have grown over time. Amazon says in its explanation that one contributing factor was that its systems had grown on top of older components that had not been restarted for a long time. When a restart was needed to deal with an unplanned occurrence, it took much longer than expected. How vulnerable are your systems to this kind of delayed response?

- Sometimes it is hard to find out what is happening when your systems go down. For many organizations and their users, there was a breakdown of information about what was happening and where the problem was. Isitdownrightnow.com, one of the sites commonly used to identify individual site issues, was itself affected. It was through Twitter that many people learned about the outage and that the problem was an external one. What information tools do you have for finding out whether problems are localized or widespread? Have you articulated a well-planned checklist so that resources are not wasted if the fault is not internal?

- Personally, I was trying to use an online news aggregator which I check daily and got a "No Content" message from their page. Unfortunately for them, I thought the problem was caused by a push they had on to encourage users to upgrade to a new version of their service. I totally misread the situation and for a few hours was really frustrated by their service and support. That was the wrong response from me, as they were impacted by something beyond their control. This should be a caution for us all: we can easily come to a wrong conclusion about what is happening in an IT situation when we don't have the facts.

- Know what systems, services and organizational needs are affected by each and all of your IT structures. Is your VoIP affected by something you are not aware of because it needs an Internet connection? Could your financial systems be affected if your bank connection were not available for an extended period? How many people would be idled if you lost a 'cloud' connection to key software? How much would the downtime cost? What customer-related activities might suffer?
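One way to start answering questions like these is to write your dependencies down in a form you can query. The following is only a minimal sketch: the service names, the dependency map and the hourly cost figures are all made-up placeholders, not data from any real organization. It walks the map to list everything that would be affected, directly or indirectly, if one component went down, and adds up a rough cost per hour.

```python
from collections import deque

# Hypothetical dependency map: each service lists what it depends on.
DEPENDS_ON = {
    "voip_phones": ["internet_link"],
    "order_desk": ["cloud_crm"],
    "cloud_crm": ["internet_link", "object_storage"],
    "payments": ["bank_gateway", "internet_link"],
    "reporting": ["object_storage"],
}

# Hypothetical cost of each service being down, in dollars per hour.
COST_PER_HOUR = {
    "voip_phones": 200,
    "order_desk": 1500,
    "cloud_crm": 300,
    "payments": 2000,
    "reporting": 50,
}


def affected_by(failed, depends_on):
    """Return every service that depends on `failed`, directly or indirectly."""
    # Invert the map so we can walk from a dependency to its dependents.
    dependents = {}
    for service, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(service)

    seen = set()
    queue = deque([failed])
    while queue:
        current = queue.popleft()
        for service in dependents.get(current, ()):
            if service not in seen:
                seen.add(service)
                queue.append(service)
    return seen


def hourly_cost(services, cost_per_hour):
    """Sum the per-hour cost of the given services (unknown services count as 0)."""
    return sum(cost_per_hour.get(service, 0) for service in services)


if __name__ == "__main__":
    impacted = affected_by("object_storage", DEPENDS_ON)
    print(sorted(impacted), hourly_cost(impacted, COST_PER_HOUR))
```

Even a rough map like this tends to surface surprises, such as an order desk that quietly depends on a storage service nobody thinks about until it is unavailable.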

Having answers to these types of questions is an important part of any business plan. In our highly digitized world it may be necessary to maintain some analog systems which could keep you functioning if the digital infrastructure has to be shut down.

I know of one local company that actually maintains a paper-based 'emergency' system for all of their order desks so that customers would not be totally inconvenienced if the electronic systems they normally rely on got shut off. They have a formalized recovery procedure for capturing the data from the paper system into the electronic one when it is back online, without creating duplication or inconveniencing their customers. Can your organization say it is this prepared for a failure?
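I don't know how that company's recovery procedure actually works, but the "no duplication" part can be illustrated with a small sketch. It assumes, purely for illustration, that every paper form carries a pre-printed serial number and that the electronic system stores orders keyed by that serial; the `PaperOrder` fields and the function name are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PaperOrder:
    form_serial: str  # assumed: unique number pre-printed on each paper form
    customer: str
    item: str
    quantity: int


def import_paper_orders(paper_orders, electronic_orders):
    """Copy paper orders into the electronic store, skipping ones already entered.

    `electronic_orders` is a dict keyed by form serial and is updated in place.
    Returns two lists of serials: those imported and those skipped as duplicates.
    """
    imported, skipped = [], []
    for order in paper_orders:
        if order.form_serial in electronic_orders:
            # Already keyed in (or the same form appears twice in the batch).
            skipped.append(order.form_serial)
            continue
        electronic_orders[order.form_serial] = order
        imported.append(order.form_serial)
    return imported, skipped


if __name__ == "__main__":
    store = {}
    batch = [
        PaperOrder("P-0001", "Acme Ltd", "widgets", 10),
        PaperOrder("P-0002", "Bolt Inc", "gaskets", 4),
    ]
    print(import_paper_orders(batch, store))
    # Running the same batch again imports nothing new.
    print(import_paper_orders(batch, store))
```

Because the import is keyed on the form serial, the same stack of paper can be entered twice by mistake without creating a second copy of any order.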

Luckily, IT systems are normally very robust, and system administrators take the need for security, backup, redundancy and planning seriously. This means that most organizations can operate each day without a threat of disaster hanging over their heads.

However, as the recent AWS S3 issue has shown, even the top professionals in the space can be caught out, and even with a pretty quick recovery there can be a large amount of inconvenience, confusion and disruption.

Lee K
